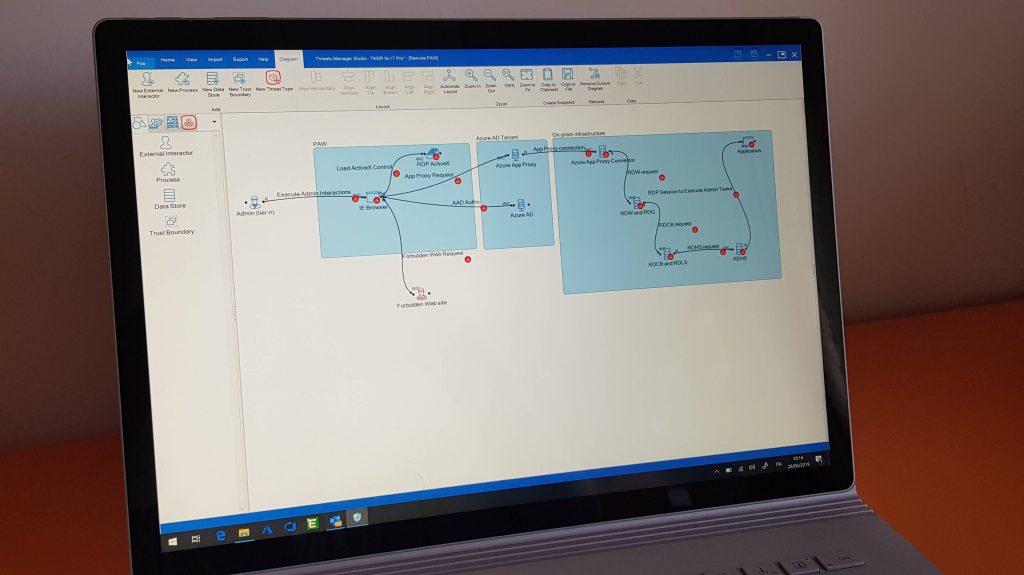

The vacation is over, and it has been very productive. Hopefully, you will be able to reap the benefits! I am happy to announce a new version of Threats Manager Studio: v1.5.2.

For most users, this new version contains just a few fixes: some bugs related to DevOps and TMT, and a couple of other issues I have been informed about. On that account, I would like to thank rsrinivasanhome for tirelessly using TMS and reporting the problems she or he finds in it. You are very helpful, thank you!

But this new release is not only about bug fixing. Its highlight is a new Extension that introduces Quantitative Risk Analysis based on FAIR. This is the first time a free Threat Modeling tool supports Quantitative Risk Analysis!

The new Extension has been in the making for years, since even before TMS was conceived in 2018. Until now, I hadn’t found the time to complete it, and frankly it didn’t make much sense without a fully functioning foundation.

I’m going to create a page to document it soon, under Learning\Advanced Topics\Extensions, but for now I just want to give you a few insights.

Using Quantitative Risk Analysis

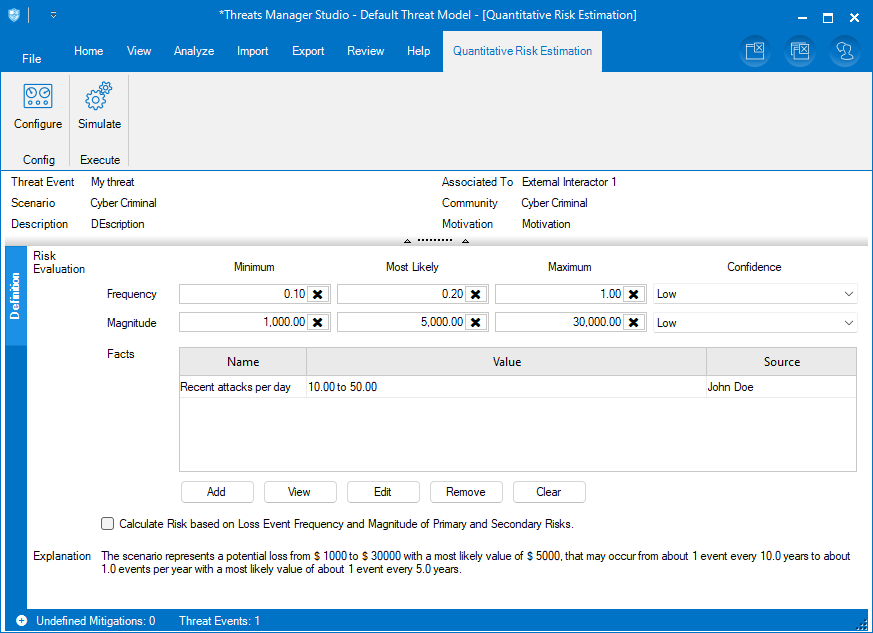

First of all, the new function is available in Pioneer Execution Mode. To access it, you have to create a Scenario from the Threat Event List: click a Threat Event, click the Add Scenario button in the ribbon, and fill in the required information. You should then see Evaluate risk quantitatively… in the context menu of the new Scenario. Clicking it opens the Quantitative Risk Estimation panel. Initially, you will not be able to do much: to proceed, you’ll need to use the Configure button to

- specify the currency, if different from the one defined in the system (shown as default);

- select a Fact Provider, which stores the facts you will use to document the rationale for your estimations;

- indicate a default Context for the facts, which typically will contain the name of the Project;

- specify the monetary Thresholds which will allow the tool to map the results to one of the Severity levels;

- select the ranges of interest for your estimation, expressed as percentiles;

- indicate the reference measure to use for calculating the Severity;

- and finally, specify the number of iterations for the Monte Carlo simulation.
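To make the role of the Thresholds more concrete, here is a minimal Python sketch showing how a set of Configure-style settings could map a monetary amount to a Severity level. The class name `QuantitativeConfig`, its field names, the severity scale and the threshold values are all hypothetical and chosen only for illustration. They are not taken from the Extension.

```python
from bisect import bisect_right
from dataclasses import dataclass, field

# Hypothetical severity scale, ordered from lowest to highest.
SEVERITIES = ["Info", "Low", "Medium", "High", "Critical"]


@dataclass
class QuantitativeConfig:
    """Illustrative stand-in for the Configure settings (names and defaults are assumptions)."""

    currency: str = "USD"
    # One boundary between each pair of adjacent severities, expressed in `currency`.
    thresholds: list = field(default_factory=lambda: [500.0, 5_000.0, 50_000.0, 500_000.0])
    percentiles: tuple = (10, 90)      # range of interest: 10th to 90th percentile
    reference_measure: str = "Mode"    # statistic compared against the thresholds
    iterations: int = 10_000           # number of Monte Carlo iterations

    def __post_init__(self) -> None:
        if len(self.thresholds) != len(SEVERITIES) - 1:
            raise ValueError("expected one threshold between each pair of adjacent severities")
        if sorted(self.thresholds) != self.thresholds:
            raise ValueError("thresholds must be in ascending order")

    def severity_for(self, amount: float) -> str:
        """Return the severity band for `amount`; a value equal to a threshold goes to the higher band."""
        return SEVERITIES[bisect_right(self.thresholds, amount)]


config = QuantitativeConfig()
print(config.severity_for(1069.06))  # Low, with these hypothetical thresholds
```

Using `bisect_right` on a sorted list keeps the lookup simple and makes the boundary rule explicit: each threshold is the lowest amount that belongs to the next band.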

If you do not understand something, do not worry. Most values already have defaults, and you should be able to fill in what is missing quite easily. And of course, the documentation will explain everything in detail once it is ready.

Then you have to enter your estimations. FAIR defines three levels of detail: a top level, where you define the Frequency and Magnitude of the loss; an intermediate level, which lets you distinguish between the various forms of loss; and a third level, which lets you analyze the capabilities of the attacker in depth. Threats Manager Platform (the Engine) supports all three, but for the sake of simplicity TMS exposes only the first two. By default you use the first, and you can switch to the second by checking the Calculate Risk based on Loss Event Frequency and Magnitude of Primary and Secondary Risks option.
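The two levels exposed by TMS can be illustrated with a small Monte Carlo sketch in Python. It assumes that each input is a three-point estimate (minimum, most likely, maximum) sampled from a beta-PERT distribution, a common choice in FAIR practice. The post does not say whether the Engine uses PERT, or how it combines primary and secondary losses internally, so `Estimate`, `simulate_level1` and `simulate_level2` are hypothetical names. At the first level, the annual loss is Loss Event Frequency times Loss Magnitude. At the second level, the magnitude is split into a primary loss plus a secondary loss weighted by the probability that it occurs.

```python
import random
from dataclasses import dataclass


@dataclass
class Estimate:
    """Hypothetical three-point estimate: minimum, most likely and maximum value."""

    low: float
    mode: float
    high: float

    def __post_init__(self) -> None:
        if not self.low <= self.mode <= self.high:
            raise ValueError("expected low <= mode <= high")

    def sample(self, rng: random.Random) -> float:
        """Draw one value from a beta-PERT distribution over [low, high]."""
        span = self.high - self.low
        if span == 0:
            return self.low
        alpha = 1 + 4 * (self.mode - self.low) / span
        beta = 1 + 4 * (self.high - self.mode) / span
        return self.low + rng.betavariate(alpha, beta) * span


def simulate_level1(lef: Estimate, magnitude: Estimate, iterations: int, seed: int = 0) -> list:
    """Level 1: annual loss = Loss Event Frequency (events/year) x Loss Magnitude (per event)."""
    rng = random.Random(seed)
    return [lef.sample(rng) * magnitude.sample(rng) for _ in range(iterations)]


def simulate_level2(lef: Estimate, primary: Estimate, secondary_probability: Estimate,
                    secondary: Estimate, iterations: int, seed: int = 0) -> list:
    """Level 2 (simplified): annual loss = LEF x (primary + P(secondary) x secondary)."""
    rng = random.Random(seed)
    losses = []
    for _ in range(iterations):
        # Secondary loss is weighted by its probability rather than drawn per event.
        per_event = primary.sample(rng) + secondary_probability.sample(rng) * secondary.sample(rng)
        losses.append(lef.sample(rng) * per_event)
    return losses


level1 = simulate_level1(Estimate(0.5, 1.0, 4.0), Estimate(500.0, 1_000.0, 3_000.0), iterations=10_000)
```

Each call returns one simulated annual loss per iteration. A results panel would summarize that list with percentiles and a most probable value.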

If you do everything right, you will be rewarded with an Explanation like the one shown below. Check it to verify that you have correctly expressed your intention before proceeding further.

The tool lets you associate facts with every estimation you make: they provide a rationale and make the results trustworthy. For now, the facts are stored locally, under your Documents folder. In the future, you will have the option to save them centrally within your Organization. This will make it possible to build a dictionary of facts that speeds up estimation for you and your fellow Threat Modelers.

When you are happy with the simulation parameters you have entered, click the Simulate button in the ribbon. It performs a Monte Carlo simulation and shows the results in a neat panel, as shown below.
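As a rough illustration of what the simulation output boils down to, the following Python sketch summarizes a list of simulated annual losses into the two selected percentiles and a most probable value (the mode). The function name `summarize`, the histogram-based estimate of the mode and the bin count are assumptions made for this example. The post does not describe how the Extension computes these statistics.

```python
import random
import statistics


def summarize(losses: list, lower_pct: int = 10, upper_pct: int = 90, bins: int = 50) -> dict:
    """Summarize simulated annual losses (hypothetical helper, not the Extension's API)."""
    if not 1 <= lower_pct < upper_pct <= 99:
        raise ValueError("percentiles must satisfy 1 <= lower < upper <= 99")
    # 99 cut points: cuts[k - 1] is the k-th percentile.
    cuts = statistics.quantiles(losses, n=100)
    lo, hi = min(losses), max(losses)
    if hi == lo:
        mode = lo
    else:
        # Estimate the mode as the midpoint of the most populated histogram bin.
        width = (hi - lo) / bins
        counts = [0] * bins
        for x in losses:
            counts[min(int((x - lo) / width), bins - 1)] += 1
        peak = counts.index(max(counts))
        mode = lo + (peak + 0.5) * width
    return {
        "lower": cuts[lower_pct - 1],
        "upper": cuts[upper_pct - 1],
        "mode": mode,
        "mean": statistics.fmean(losses),
    }


rng = random.Random(42)
# Right-skewed sample data standing in for a real simulation run.
sample = [rng.lognormvariate(7.0, 0.8) for _ in range(20_000)]
print(summarize(sample))
```

Loss distributions are typically right-skewed, so the mode, the median and the mean can differ noticeably. This is why the reference measure used for the Severity is a configurable choice.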

The panel is structured as follows:

- On the right, you can find the Annualized Risk Exposure chart. It shows how the potential losses over the year are distributed. The percentiles you selected in the Configuration are clearly highlighted, to show you the range where the loss is most likely to fall. This chart is an essential tool for making decisions. The documentation will explain how to use it, but for now, if you lack the background to interpret it, you can simply skip it, because the Extension interprets it for you!

- On the upper left, you find some statistics related to the simulation. If you do not understand them, do not worry and simply ignore them: again, the Extension reads them for you!

- On the lower left you find the interpretation of the results in simple terms. This is what you need to understand, and this is what makes this tool so useful even for non-experts!

Given that the lower left corner is so important, let’s spend a few words on the results of our sample simulation:

The scenario represents a potential annual loss which is between $ 704.08 (10th percentile) and $ 5443.96 (90th percentile), with a most probable value of $ 1069.06.

The confidence in this estimation is 80%.

The severity associated to the reference measure (Mode) is Low.

The scenario has already been updated with the estimated severity.

This first part should be simple enough to understand. It gives you a reference to evaluate the risk. Since it is an estimation, it may be wrong, so it also gives you a confidence level for the estimation, expressed as a percentage. The second part concerns the mapping of this estimation to a Severity. In this case, the Explanation tells you that the estimated Severity is Low, because of the selected Thresholds and because the Mode, which is the most probable value, has been used as the reference measure.
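In the sample results, the confidence of 80% coincides with the width of the selected percentile range (90 − 10 = 80), that is, the share of simulated outcomes expected to fall between the two bounds. Assuming that this is how the figure is derived (the post does not say so explicitly), here is a Python sketch of how such an Explanation could be assembled. `ordinal` and `explain` are hypothetical helpers, and the wording simply mirrors the sample output above.

```python
def ordinal(n: int) -> str:
    """Return 1st, 2nd, 3rd, 4th, ..., 11th, 12th, 13th, 21st, ..."""
    if 10 <= n % 100 <= 20:
        suffix = "th"
    else:
        suffix = {1: "st", 2: "nd", 3: "rd"}.get(n % 10, "th")
    return f"{n}{suffix}"


def explain(lower: float, upper: float, mode: float, severity: str,
            lower_pct: int = 10, upper_pct: int = 90,
            measure: str = "Mode", currency: str = "$") -> str:
    """Build a plain-language summary; confidence is assumed to be upper_pct - lower_pct."""
    confidence = upper_pct - lower_pct
    return "\n".join([
        f"The scenario represents a potential annual loss which is between "
        f"{currency} {lower:.2f} ({ordinal(lower_pct)} percentile) and "
        f"{currency} {upper:.2f} ({ordinal(upper_pct)} percentile), "
        f"with a most probable value of {currency} {mode:.2f}.",
        f"The confidence in this estimation is {confidence}%.",
        f"The severity associated to the reference measure ({measure}) is {severity}.",
    ])


print(explain(704.08, 5443.96, 1069.06, severity="Low"))
```

With the sample values, this reproduces the first three lines of the Explanation shown above.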

The Extension takes the liberty of updating the Severity of the Scenario automatically. This is how the result of the Quantitative Risk simulation is fed back into the Threat Model. You’ll probably recall the Calculated Severity feature, introduced recently with version 1.5.0: it has been updated to also consider the Severity of the Scenarios. The initial Severity of the Threat Event is now calculated as the maximum of the Severity of the associated Threat Type and the Severities of the associated Scenarios. Previously, the algorithm considered only the Threat Type.

Let’s look at an example to clarify this concept. We have a Threat Type with Medium Severity; we create a new Threat Event out of it, and then we associate two Scenarios with that Threat Event. The Quantitative Risk analysis estimates the Severities of the two Scenarios as Low and High, respectively. The maximum of those Severities is High, so the Calculated Severity algorithm considers the base Severity of the Threat Event to be High, and not Medium as it did with versions 1.5.0 and 1.5.1. If you now open the Calculated Severity List panel from the Review ribbon, you will see that the Threat Event is highlighted because its current Severity is considered incorrect, and High is suggested as the new value.
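The rule described above can be sketched in a few lines of Python: the base Severity of a Threat Event is the maximum of its Threat Type's Severity and the Severities of its Scenarios. The `Severity` enum values and the `base_severity` and `suggest_severity` helpers are hypothetical. The sketch also ignores any other factors the Calculated Severity feature may take into account, and reproduces only the comparison described in this post.

```python
from enum import IntEnum
from typing import Iterable, Optional


class Severity(IntEnum):
    """Hypothetical ordered severity scale."""
    INFO = 0
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    CRITICAL = 4


def base_severity(threat_type: Severity, scenarios: Iterable[Severity]) -> Severity:
    """Maximum of the Threat Type severity and the severities of the associated Scenarios."""
    return max([threat_type, *scenarios])


def suggest_severity(current: Severity, threat_type: Severity,
                     scenarios: Iterable[Severity]) -> Optional[Severity]:
    """Return the suggested value if the current one differs from the calculated base, else None."""
    calculated = base_severity(threat_type, scenarios)
    return calculated if calculated != current else None


# The example above: Medium Threat Type and current Severity, Scenarios estimated as Low and High.
suggested = suggest_severity(Severity.MEDIUM, Severity.MEDIUM, [Severity.LOW, Severity.HIGH])
print(suggested.name if suggested is not None else "no change")  # HIGH
```

The explicit `is not None` check matters here, because `Severity.INFO` has the value 0 and would otherwise be treated as "no suggestion".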

Next Steps

I hope this description is enough for you to start experimenting with Quantitative Risk analysis. It is designed to already provide more value and to enable more meaningful conversations with CxOs. Still, it is new and somewhat experimental, and we can get much more value out of it! This is your chance to contribute ideas on how to evolve the support for Quantitative Risk in Threat Modeling. So, please share your thoughts and concerns. Together, we will make Quantitative Risk Analysis a fundamental tool for next-gen Threat Modeling!

Happy Threat Modeling to everyone!
